February 27, 2020 | Internet of Things, Machine Vision, Robotics & Automation

Machine Vision Promises Nearly Flawless Quality Control

Robots are capable of incredible feats well beyond human abilities: Guinness World Records recognizes the Fanuc Corporation’s M-2000iA/2300 Super Heavy Payload Robot as the world’s strongest, capable of moving objects that weigh more than 5,000 pounds.

Strength has long made robots a staple of assembly lines, where their immense power exceeds human limits. But companies are discovering that robots can help overcome not just human physical limitations but mental ones as well.

(Human) Mistakes Happen

Quality control is a prime example of robotics overcoming human limitations. In quality control, mistakes can lead to returned products, rush orders and even damaged reputations. Humans have traditionally been tasked with quality control because it requires judgment. Is this bottle filled with enough water for customers to consider it “full”? Is it so overfilled that it may compromise the capping process? A human can easily make that determination, even as a bottle moves rapidly through a plant.

But something interesting happens when a human views not one bottle but hundreds, or even thousands, streaming along a high-speed bottling line. After seeing the same image repeatedly, the brain imprints it. So when an inspector sees a run of bottles at the proper fill level followed by one that is half-empty, the inspector’s eyes send that signal to the brain. The brain, however, may substitute the imprinted image of a full bottle and fail to register the problem.

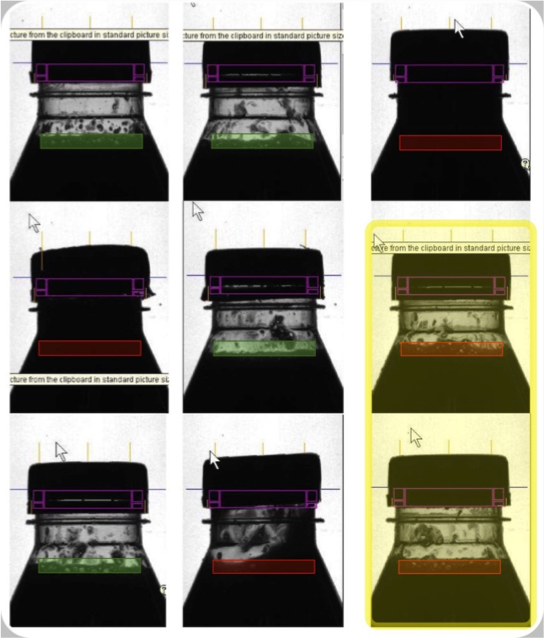

Machine vision can be used to determine when a bottle is too full or not full enough (in the images above, both faults are marked with a red rectangle).

Machine vision overcomes this human limitation: a camera system captures images and software analyzes them. Humans are simply not as well suited to repetitive tasks as machines are, and manufacturers have taken notice. For example, Heineken now uses R&D Vision’s machine vision system at a beer-bottling facility in France, where it inspects 80,000 bottles per hour with a failure rate of practically 0%. Machine vision can also be used to inspect everything from pipe threads to product surface defects to component alignment.
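Once the image is digitized, the fill-level judgment described above reduces to a simple rule. The sketch below assumes a backlit bottle captured as an 8-bit grayscale image (0 = black, 255 = white), with the liquid appearing darker than the empty headspace. The function names, darkness threshold and tolerance band are hypothetical values chosen for illustration. This is not how R&D Vision’s or any other commercial system actually works.

```python
from typing import List, Optional

# Rows of 8-bit grayscale values (0 = black, 255 = white); an assumed layout.
Image = List[List[int]]

def find_fill_line(image: Image, column: int, dark_threshold: int = 100) -> Optional[int]:
    """Return the first row (counting from the top) whose pixel in `column`
    is darker than `dark_threshold`, or None if no row is that dark.

    Assumes backlit liquid renders darker than the empty headspace.
    """
    for row_index, row in enumerate(image):
        if row[column] < dark_threshold:
            return row_index
    return None

def inspect_fill(image: Image, column: int, min_row: int, max_row: int) -> str:
    """Classify a bottle against a hypothetical tolerance band [min_row, max_row].

    Row numbers grow downward, so a fill line above min_row means too much
    liquid and a fill line below max_row means too little.
    """
    fill_row = find_fill_line(image, column)
    if fill_row is None:
        return "empty"
    if fill_row < min_row:
        return "overfilled"
    if fill_row > max_row:
        return "underfilled"
    return "ok"

def synthetic_bottle(height: int, width: int, fill_row: int) -> Image:
    """Build a test image: bright headspace (220) above dark liquid (40) from fill_row down."""
    return [[220 if r < fill_row else 40 for _ in range(width)] for r in range(height)]

for fill_row in (10, 30, 60):
    bottle = synthetic_bottle(100, 20, fill_row)
    print(fill_row, inspect_fill(bottle, column=10, min_row=25, max_row=35))
# 10 overfilled
# 30 ok
# 60 underfilled
```

Scanning a single pixel column keeps the example short. A practical system would likely sample several columns and calibrate the band to the bottle’s neck geometry.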

How Machine Vision Works

A machine vision system consists of a digital camera, lighting and optics, coupled with software that processes the images. The system’s electronic “brain” evaluates each image and then takes action based on the analyzed data. On the hardware front, cameras, optics and lighting systems have advanced to capture precise image information, even under challenging conditions. In some systems, for example, an image may lack good contrast under white light. Banks of LEDs can then strobe through red, green and blue light within milliseconds to find a workable wavelength, adjusting intensity and frequency as they go.
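The lighting search above can be sketched as follows. The system captures one frame per LED color and keeps the color whose frame has the highest RMS contrast, defined here as the standard deviation of its pixel intensities. Using RMS contrast as the scoring metric is an illustrative assumption. The function names and pixel values are also hypothetical, and the sketch ignores intensity and frequency tuning.

```python
from statistics import pstdev
from typing import Dict, List

Image = List[List[int]]  # rows of 8-bit grayscale values

def rms_contrast(image: Image) -> float:
    """RMS contrast: population standard deviation of all pixel values."""
    pixels = [p for row in image for p in row]
    return pstdev(pixels)

def pick_lighting(captures: Dict[str, Image]) -> str:
    """Return the lighting condition whose capture has the highest RMS contrast."""
    return max(captures, key=lambda name: rms_contrast(captures[name]))

# Hypothetical 2x4 captures of the same part under three LED colors.
captures = {
    "red":   [[120, 125, 130, 128], [122, 127, 129, 126]],  # washed out
    "green": [[30, 200, 35, 210], [28, 205, 40, 198]],      # clear feature/background split
    "blue":  [[90, 140, 95, 150], [92, 138, 100, 145]],
}
print(pick_lighting(captures))  # green
```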

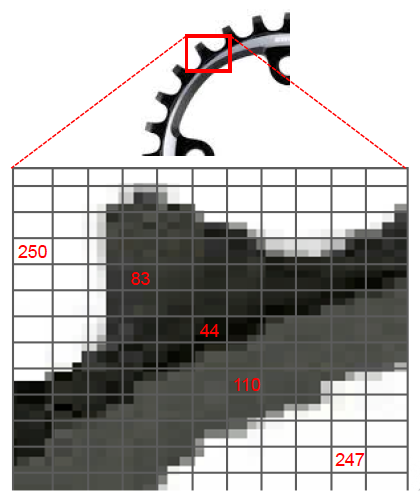

Once a workable image is captured, its pixels are assigned a numerical value. In grayscale imaging, a bright white pixel is given a level of 255; a completely black pixel is assigned a grayscale value of zero. Once the software system assigns numerical values to all the pixels, it looks for patterns and edges, and combines that edge data to identify shapes. This is the underlying software that enables a vision system to detect and identify bolt holes, their precise location on a part, and whether they’re the correct size to pass the inspection.

Machine vision assigns numerical values to all the pixels in a grayscale image. Software can then identify irregularities and flag products that don’t meet quality standards.

Machine vision assigns numerical values to all the pixels in a grayscale image. Software can then identify irregularities and flag products that don’t meet quality standards.
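The edge-then-measure idea can be illustrated along a single scan line. This is a simplified, hypothetical sketch. It assumes an 8-bit image in which a bolt hole appears dark against a bright surface, with the scan line crossing the hole’s center. The threshold, nominal width and tolerance are invented for the example. Production software typically works in two dimensions with gradient operators rather than one row of pixels.

```python
from typing import List, Tuple

def find_edges(profile: List[int], min_step: int = 100) -> List[Tuple[int, str]]:
    """Return (index, direction) for every jump of at least `min_step` gray
    levels between neighboring pixels. 'falling' means bright-to-dark."""
    edges = []
    for i in range(1, len(profile)):
        step = profile[i] - profile[i - 1]
        if step <= -min_step:
            edges.append((i, "falling"))
        elif step >= min_step:
            edges.append((i, "rising"))
    return edges

def hole_width(profile: List[int]) -> int:
    """Width in pixels of the first dark run: from its falling edge to the next rising edge."""
    edges = find_edges(profile)
    falling = [i for i, d in edges if d == "falling"]
    if not falling:
        raise ValueError("no bright-to-dark edge found")
    start = falling[0]
    rising = [i for i, d in edges if d == "rising" and i > start]
    if not rising:
        raise ValueError("dark run never returns to bright")
    return rising[0] - start

def hole_passes(profile: List[int], nominal_px: int, tol_px: int) -> bool:
    """True if the measured width is within +/- tol_px of the nominal width."""
    return abs(hole_width(profile) - nominal_px) <= tol_px

# Hypothetical scan lines: bright metal (250) with a dark hole (10).
good = [250] * 5 + [10] * 8 + [250] * 5
oversize = [250] * 5 + [10] * 11 + [250] * 2
print(hole_width(good), hole_passes(good, nominal_px=8, tol_px=1))          # 8 True
print(hole_width(oversize), hole_passes(oversize, nominal_px=8, tol_px=1))  # 11 False
```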

Unlocking the Potential of Smarter Machines

In the last 10 years, artificial intelligence (AI) has advanced beyond traditional machine vision software, in which a programmer must specify which features the system should treat as key characteristics for inspection. A sprocket is easy to identify with traditional vision software because it has a defined shape with minimal variation. But what about objects whose appearance varies widely?

With a large enough dataset, AI systems can learn nuances without being explicitly programmed. Blue River Technology in Sunnyvale, Calif., for example, couples computer vision and machine learning in its See & Spray weed-control system. As tractors pull herbicide sprayers across cotton fields, cameras capture each plant. The system then determines whether it is a valuable crop plant or a problematic weed and applies herbicide only to the individual weeds. Crop dusting takes a shotgun approach; See & Spray instead administers a micro-dose of herbicide to the weeds alone. As a result, it cuts herbicide costs by 90 percent and minimizes the leaching of harmful chemicals into the environment.

Artificial intelligence enables machine vision to be applied in unconventional ways. It might take thousands of lines of code for a traditional machine vision system to account for the many sizes and shapes of a green bean. AI algorithms, by contrast, can be trained to distinguish a green bean from a long stalk of grass or a leaf. They can likewise learn the variations in cursive script, enabling machines to identify handwritten numbers and letters.
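“Learning from examples” can be illustrated in miniature with a k-nearest-neighbors classifier. This simple machine-learning technique is swapped in here for illustration. It is not what Blue River or any other vendor necessarily uses; modern systems more often train deep neural networks directly on pixels. The two features (length and width in millimeters) and all training samples are made up. The point is that no bean-versus-grass rule is hand-coded: the decision comes entirely from the labeled data.

```python
import math
from collections import Counter
from typing import List, Tuple

Sample = Tuple[Tuple[float, float], str]  # ((length_mm, width_mm), label)

def knn_predict(training: List[Sample], features: Tuple[float, float], k: int = 3) -> str:
    """Label `features` by majority vote among the k closest training samples
    (Euclidean distance). No shape rules are written by hand."""
    nearest = sorted(training, key=lambda s: math.dist(s[0], features))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Hypothetical labeled measurements of green beans and grass stalks.
training = [
    ((110, 8), "bean"), ((95, 7), "bean"), ((130, 9), "bean"), ((120, 10), "bean"),
    ((250, 3), "grass"), ((300, 2), "grass"), ((220, 4), "grass"), ((280, 3), "grass"),
]
print(knn_predict(training, (105, 9)))  # bean
print(knn_predict(training, (260, 3)))  # grass
```

Because length values are much larger than widths here, length dominates the distance. With real data, features are normally rescaled to comparable ranges before a distance-based method like this is applied.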

Closing the Quality Gap

Multiple quality control studies performed from the 1970s to the present show that human inspectors typically find only about 80% of the defects in manufactured parts. If you rely on human inspectors, then, roughly 20% of your defects are likely to slip through. That is a stunning realization when machine vision systems capable of close to 100% defect detection can be implemented for as little as $10,000.
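To make the gap concrete, here is the expected number of escaped defects for a hypothetical run of 1,000 defective parts. The 80% human detection rate comes from the paragraph above. The 99.5% machine rate is an assumed stand-in for “close to 100%” and is not a figure from the studies cited.

```python
def escaped_defects(defects: int, detection_rate: float) -> float:
    """Expected number of defective parts that pass inspection undetected."""
    return defects * (1.0 - detection_rate)

defects_per_run = 1_000  # hypothetical
print(round(escaped_defects(defects_per_run, 0.80)))   # human inspectors: 200
print(round(escaped_defects(defects_per_run, 0.995)))  # machine vision (assumed rate): 5
```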

Even if you can’t invest in full robotic automation, a machine vision system can still enhance quality control. It tirelessly flags problems and frees inspectors to do what humans do best: fixing those problems. Contact us to learn more about machine vision and how it can increase the efficiency and productivity of your plant.

This story originally appeared in IndustryWeek.